TypingMind×

TypingMind× xAI (Grok)

xAI (Grok)About xAI (Grok)

Grok is xAI's flagship family of large language models designed to deliver truthful and insightful AI responses. The xAI API provides developers with access to powerful models including Grok-4 and Grok-2-1212 (with a 131K token context window), supporting multimodal capabilities like vision processing and image generation via Flux.1. Key features include function calling for API automation, compatibility with OpenAI/Anthropic SDKs, structured outputs, and enterprise-grade security with GDPR/HIPAA compliance. The platform offers Python and JavaScript SDKs for easy integration into applications ranging from conversational AI to complex workflow automation.

Step by step guide to use xAI API Key to chat with AI

1. Get Your xAI API Key

First, you'll need to obtain an API key from xAI. This key allows you to access their AI models directly and pay only for what you use.

- Visit xAI's API console

- Sign up or log in to your account

- Navigate to the API keys section

- Generate a new API key (copy it immediately as some providers only show it once)

- Save your API key in a secure password manager or encrypted note

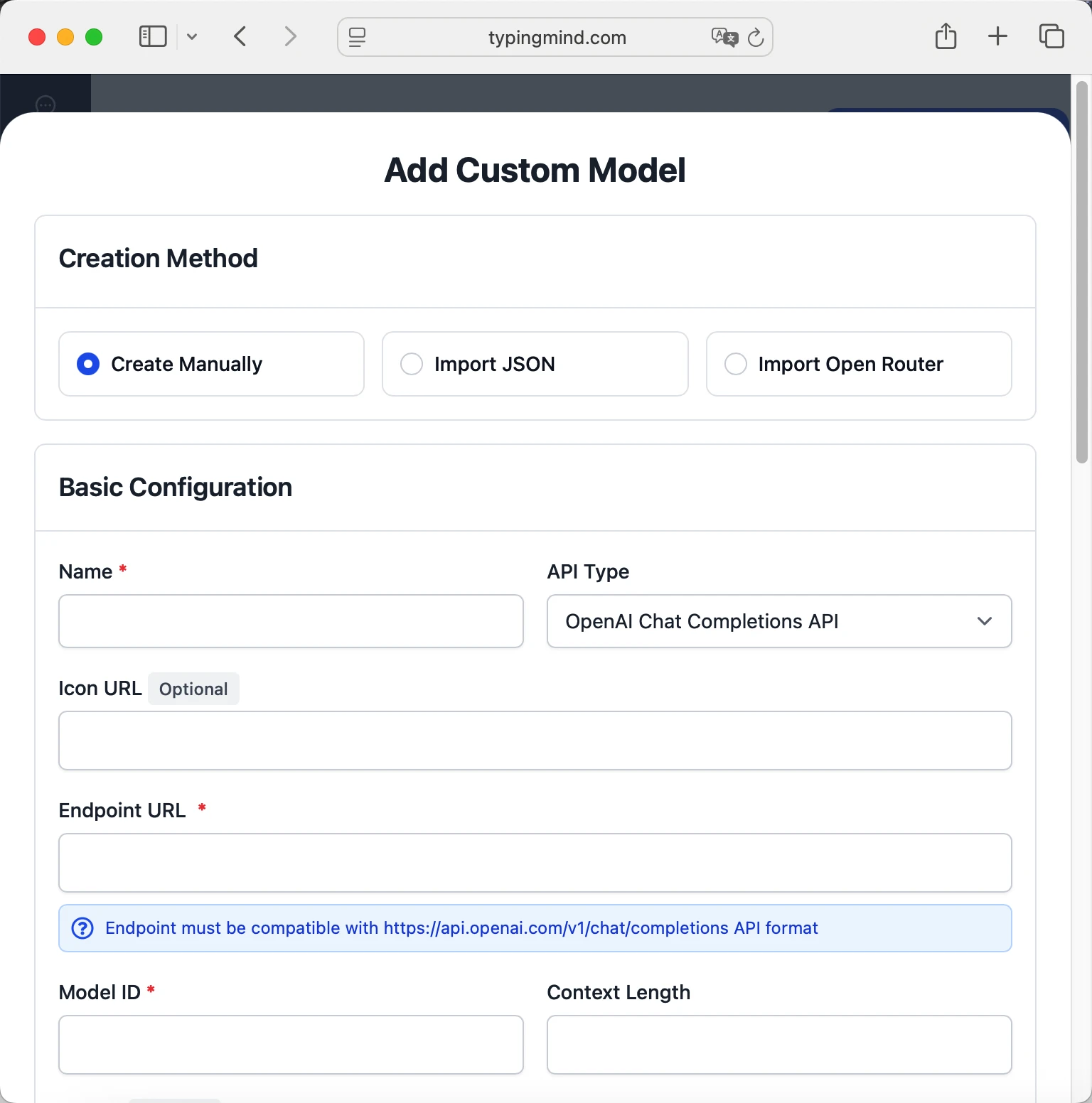

2. Connect Your xAI API Key on TypingMind

Once you have your xAI API key, connecting it to TypingMind to chat with AI is straightforward:

- Open TypingMind in your browser

- Click the "Settings" icon (gear symbol)

- Navigate to "Models" section

- Click "Add Custom Model"

- Fill in the model information:Name:

grok-4-0709 via xAI(or your preferred name)Endpoint:https://api.x.ai/v1/chat/completionsModel ID:grok-4-0709for example (check xAI model list)Context Length: Enter the model's context window (e.g., 32000 for grok-4-0709) grok-4-0709https://api.x.ai/v1/chat/completionsgrok-4-0709 via xAIhttps://www.typingmind.com/model-logo.webp32000

grok-4-0709https://api.x.ai/v1/chat/completionsgrok-4-0709 via xAIhttps://www.typingmind.com/model-logo.webp32000 - Add custom headers by clicking "Add Custom Headers" in the Advanced Settings section:Authorization:

Bearer <GROK_API_KEY>:X-Title:typingmind.comHTTP-Referer:https://www.typingmind.com - Enable "Support Plugins (via OpenAI Functions)" if the model supports the "functions" or "tool_calls" parameter, or enable "Support OpenAI Vision" if the model supports vision.

- Click "Test" to verify the configuration

- If you see "Nice, the endpoint is working!", click "Add Model"

3. Start Chatting with xAI models

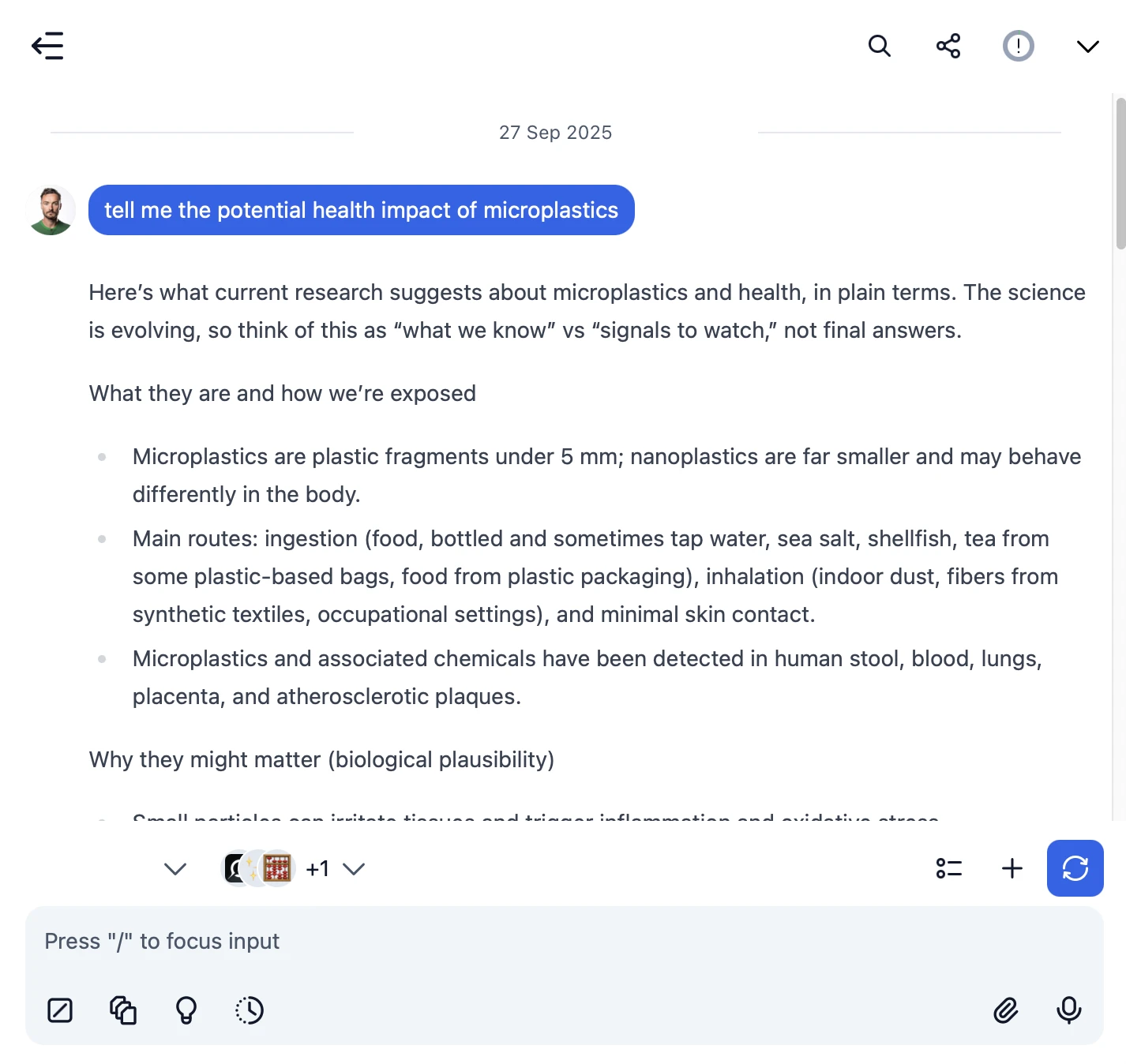

Now you can start chatting with xAI (Grok) models through TypingMind:

- Select your preferred xAI model from the model dropdown menu

- Start typing your message in the chat input

- Enjoy faster responses and better features than the official interface

- Switch between different AI models as needed

grok-4-0709

grok-4-0709

- Use specific, detailed prompts for better responses (How to use Prompt Library)

- Create AI agents with custom instructions for repeated tasks (How to create AI Agents)

- Use plugins to extend xAI capabilities (How to use plugins)

- Upload documents and images directly to chat for AI analysis and discussion (Chat with documents)

4. Monitor Your AI Usage and Costs

One of the biggest advantages of using API keys with TypingMind is cost transparency and control. Unlike fixed subscriptions, you pay only for what you actually use. Visit https://console.x.ai/team/default/usage to monitor your xAI API usage and set spending limits.

| Feature | xAI Subscription Plans | Using xAI API Keys |

|---|---|---|

| Cost Structure | ❌ Fixed monthly fee Pay even if you don't use it SuperGrok:$30/month or $300/year | ✅ Pay only for actual usage $0 when you don't use it |

| Usage Limits | ❌ Hard daily/hourly caps You have to wait for the next period to use it again | ✅ Unlimited usage No limits. Only limited by your budget |

| Model Access | ❌ Platform decides available models Old models get discontinued | ✅ Access to all API models Including older & specialized versions |

- Use less expensive models for simple tasks

- Keep prompts concise but specific to reduce token usage

- Use TypingMind's prompt caching to reduce repeat costs (How to enable prompt caching)

- Using RAG (retrieval-augmented generation) for large documents to reduce repeat costs (How to use RAG)